Speculative Decoding, Simply Explained

Learn how Speculative Decoding works from scratch and how to use it in your AI applications for faster and cheaper inference.

LLM inference is slow, memory-heavy, and computationally expensive.

LLMs are autoregressive and produce one token at a time, and the LLMs in use today also contain multiple trillion parameters. This means that producing just one token from these requires trillions of computations!

In 2022, Google published a research paper called ‘Fast Inference from Transformers via Speculative Decoding’ where they introduced an inference technique called Speculative Decoding.

This technique claimed to increase inference speeds by 2-3x without compromising LLM output quality, and since then, it has been used by AI overviews in Google Search (and every other modern LLM application) to speed up inference.

What really makes Inference slow?

You might assume that performing trillions of computations to produce a single token is what limits the inference speed, but this is a big misconception!

GPUs and TPUs in use today are powerful parallel computing machines and can perform hundreds of trillions of operations per second. This is often more than 100x higher than what’s needed to generate a token through an LLM.

So what’s the real bottleneck?

To produce a single token, the GPU must read all the model’s parameters from high-bandwidth memory (HBM) into the compute units. This process is painfully slow and leaves the compute units sitting idle while they wait for weights to arrive.

This makes the process memory-bound rather than compute-bound.

(LLM inference is very different from LLM training, where you load the parameters once and reuse them across thousands of operations on a large batch of training samples.)

Insight 1: This means that we have all that extra compute sitting there to be used.

Is predicting all tokens really that tough?

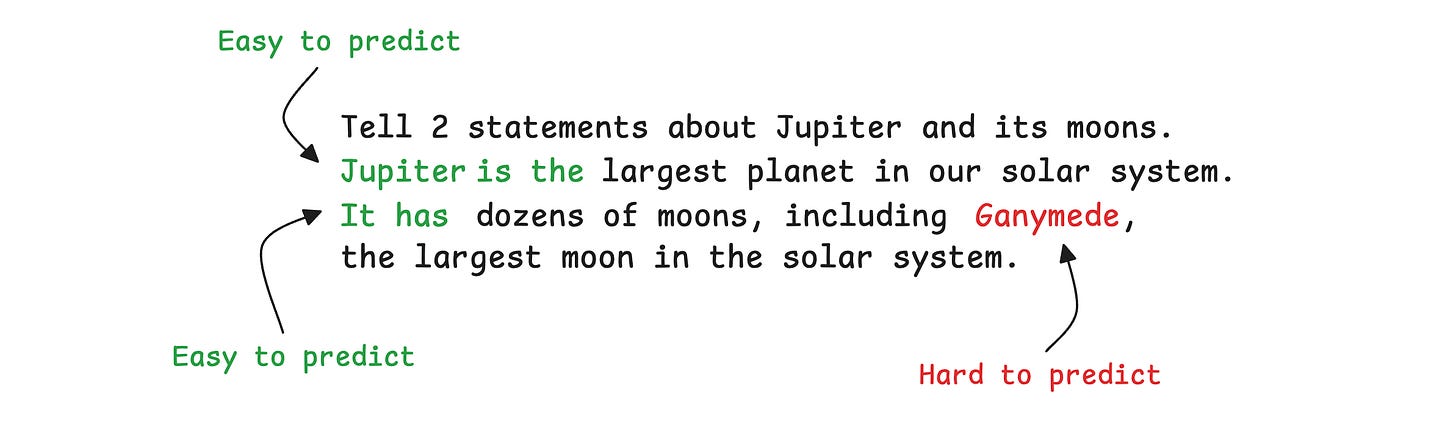

Consider this prompt and response pair:

"Tell 2 statements about Jupiter and its moons. Jupiter is the largest planet in our solar system. It has dozens of moons, including Ganymede, the largest moon in the solar system."

In this statement, considering words as tokens, some tokens, such as “Jupiter”, “is”, and “the”, are easier to predict/ generate, while others, such as “Ganymede”, are harder.

This is because the LLM has already seen “Jupiter” in its prompt, and in the positions that follow, "is" and "the" have few competing alternatives.

For “Ganymede”, its preceding context, "dozens of moons, including” leads to many possible valid completions.

Insight 2: We do not always need a powerful LLM to produce tokens that are easier to predict.

How does Speculative Decoding use these ideas?