🗓️ This Week In AI Research (22-28 March 26)

The top 10 AI research papers that you must know about this week.

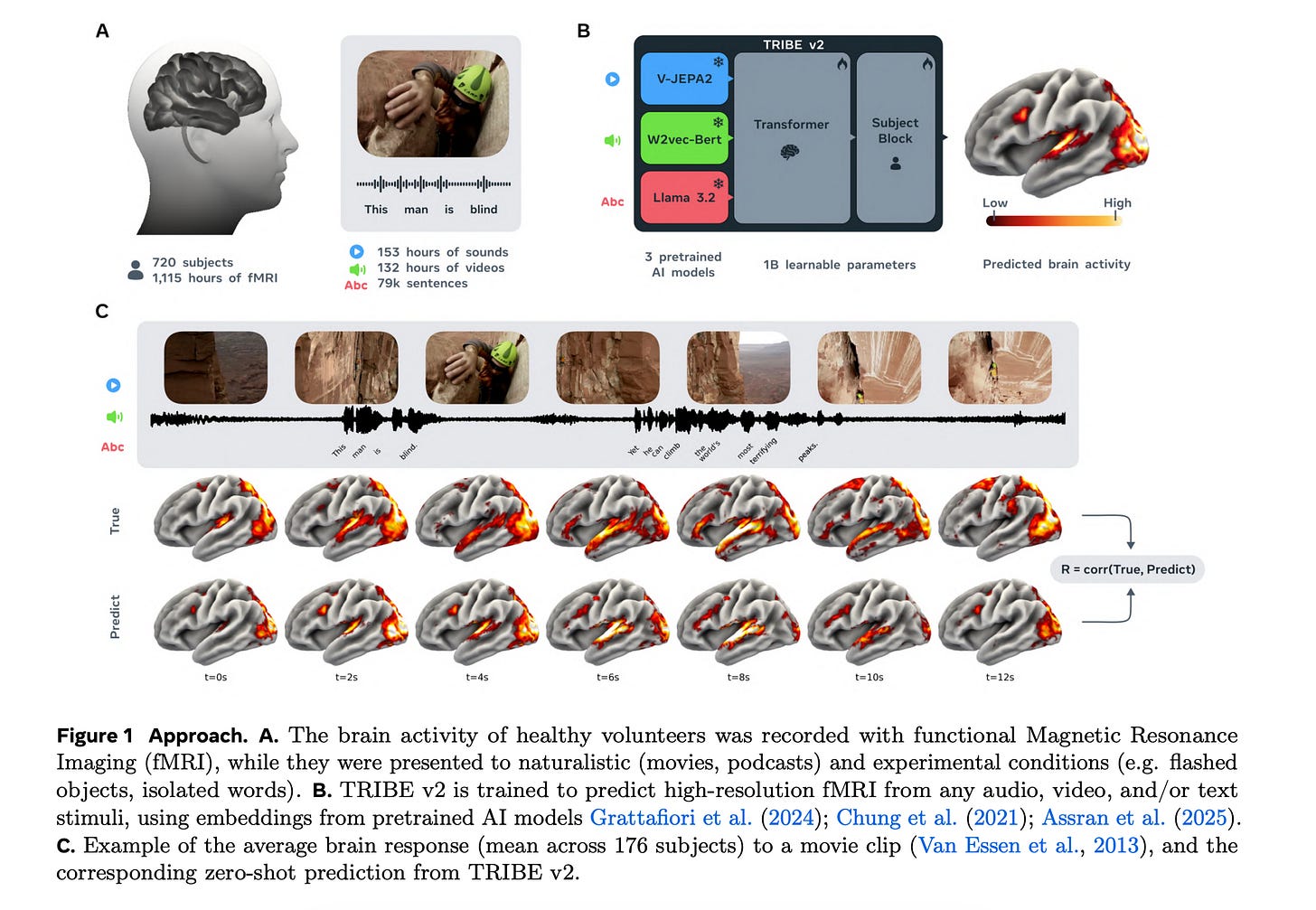

1. TRIBE v2

This research paper from Meta introduces TRIBE v2, a tri-modal (video, audio, and language) foundation model capable of predicting human brain activity across a variety of naturalistic and experimental conditions.

Trained on a unified dataset of over 1,000 hours of fMRI recordings from 720 subjects, the model accurately predicts high-resolution brain responses to new stimuli, tasks, and subjects, outperforming traditional linear encoding models with multi-fold gains in accuracy.
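For readers unfamiliar with the baseline, here is a minimal sketch of a generic voxelwise linear encoding model. It assumes ridge regression from stimulus features to voxel responses, scored by per-voxel Pearson correlation on held-out data. These are common choices in the field but are not confirmed to be the exact baseline used in the paper. The helper names `fit_ridge_encoder` and `voxelwise_pearson` are hypothetical, and the data are synthetic random numbers, not fMRI.

```python
import numpy as np

def fit_ridge_encoder(X, Y, alpha=1.0):
    """Closed-form ridge regression: W = (X^T X + alpha * I)^-1 X^T Y.
    X: (n_samples, n_features) stimulus features.
    Y: (n_samples, n_voxels) measured brain responses."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ Y)

def voxelwise_pearson(Y_true, Y_pred):
    """Pearson correlation between measured and predicted responses, one value per voxel."""
    yt = Y_true - Y_true.mean(axis=0)
    yp = Y_pred - Y_pred.mean(axis=0)
    num = (yt * yp).sum(axis=0)
    den = np.sqrt((yt ** 2).sum(axis=0) * (yp ** 2).sum(axis=0))
    return num / den

# Synthetic demo data (random numbers standing in for features and voxel responses)
rng = np.random.default_rng(0)
n_train, n_test, n_features, n_voxels = 200, 50, 16, 8
W_true = rng.normal(size=(n_features, n_voxels))
X_train = rng.normal(size=(n_train, n_features))
X_test = rng.normal(size=(n_test, n_features))
noise = 0.5
Y_train = X_train @ W_true + noise * rng.normal(size=(n_train, n_voxels))
Y_test = X_test @ W_true + noise * rng.normal(size=(n_test, n_voxels))

# Fit on training stimuli, then score predictions on unseen stimuli
W = fit_ridge_encoder(X_train, Y_train, alpha=1.0)
scores = voxelwise_pearson(Y_test, X_test @ W)
print(scores.round(2))
```

In a real fMRI pipeline the features would typically come from a pretrained model and be delayed to account for the hemodynamic response. Those steps are omitted here to keep the linear mapping itself in focus.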

Additionally, TRIBE v2 enables in silico experimentation. When tested on seminal visual and neurolinguistic paradigms, it recovers a range of results established by decades of empirical research.

Finally, by extracting interpretable latent features, TRIBE v2 reveals the fine-grained topography of multisensory integration.

Read more about this research using this link.


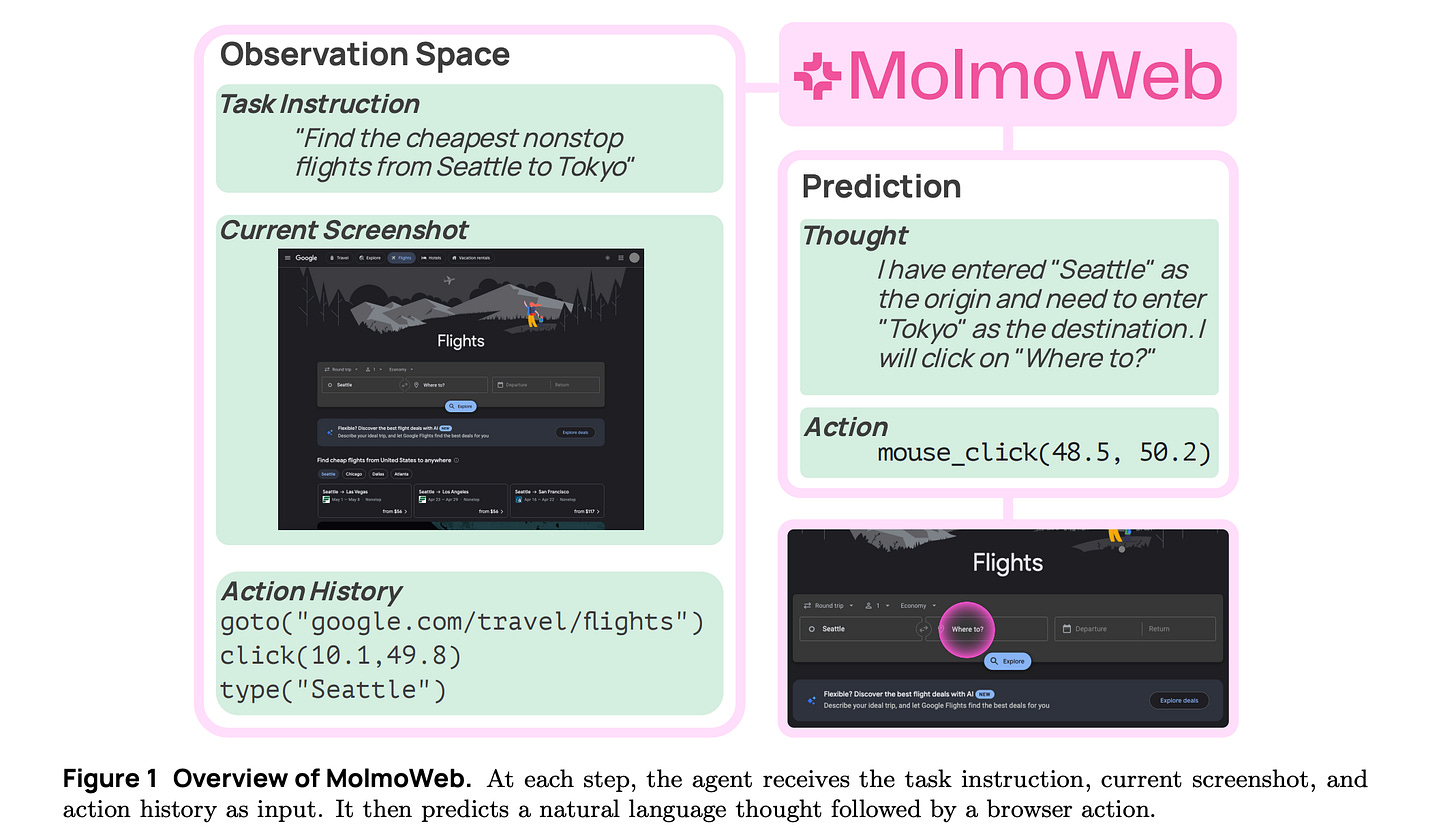

2. MolmoWeb

This research from Allen AI introduces MolmoWeb, two fully open-source visual web agents built on Molmo 2, and releases the weights, training data (MolmoWebMix), code, and evaluation tools used to build them.

Given a task instruction and a live webpage, MolmoWeb observes the page through screenshots (without relying on HTML, accessibility trees, or specialized APIs), predicts the next step, and executes browser actions such as clicking, typing, or scrolling.
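The observe, predict, act cycle described above can be sketched as a simple loop. This is a minimal illustration, not MolmoWeb's actual interface: `Action`, `FakeBrowser`, `run_agent`, and `scripted_policy` are all hypothetical names. A hard-coded policy stands in for the model and a fake browser stands in for a live page, with screenshots reduced to strings.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

@dataclass
class Action:
    kind: str                  # hypothetical action vocabulary: "click", "type", "scroll", "done"
    x: Optional[int] = None    # click coordinates in screenshot pixels
    y: Optional[int] = None
    text: Optional[str] = None # text to type

class FakeBrowser:
    """Stand-in for a real browser; a 'screenshot' is just a descriptive string here."""
    def __init__(self) -> None:
        self.log: List[Action] = []

    def screenshot(self) -> str:
        return f"screenshot after {len(self.log)} actions"

    def execute(self, action: Action) -> None:
        self.log.append(action)

# A policy maps (task, current screenshot, past actions) to the next action
Policy = Callable[[str, str, List[Action]], Action]

def run_agent(task: str, browser: FakeBrowser, policy: Policy, max_steps: int = 10) -> List[Action]:
    history: List[Action] = []
    for _ in range(max_steps):
        obs = browser.screenshot()           # observe: pixels only, no HTML or accessibility tree
        action = policy(task, obs, history)  # predict the next step
        if action.kind == "done":
            break
        browser.execute(action)              # act in the browser
        history.append(action)
    return history

def scripted_policy(task: str, obs: str, history: List[Action]) -> Action:
    """Hypothetical hard-coded plan standing in for a trained model."""
    plan = [Action("click", x=120, y=40),
            Action("type", text="wireless headphones"),
            Action("scroll")]
    return plan[len(history)] if len(history) < len(plan) else Action("done")

browser = FakeBrowser()
steps = run_agent("Search for wireless headphones", browser, scripted_policy)
print([a.kind for a in steps])  # ['click', 'type', 'scroll']
```

Keeping the policy as a plain callable makes it easy to swap the scripted stub for a real vision-language model without touching the loop itself.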

The 4B and 8B MolmoWeb models achieve state-of-the-art results among open-weight web agents, outperforming models such as Fara-7B. They also outperform agents built on much larger proprietary models, such as GPT-4o, that rely on both annotated screenshots and structured page data.

Read more about this research using this link.

3. SAM 3.1