11 Infographics on the Dark Side of AI Kept Hidden From You

From copyright breaches to missed emergencies, deepfakes to job cuts, here are 11 alarming facts backed by real studies that the AI industry keeps quiet about.

👋🏻 Hey friends!

Artificial Intelligence is one of the greatest technologies of our lifetime. But there’s a dark side to it which you might not be aware of.

In this article, I present 11 infographics that illustrate the flaws and risks of current AI systems.

Get ready to have your mind blown! 🤯

🤝 Before we begin, I want to introduce you to Emma McIlwaine, the brilliant graphic designer and illustrator behind this newsletter’s infographics.

Check out her designs on her website, follow her on LinkedIn, and reach out to work with her.

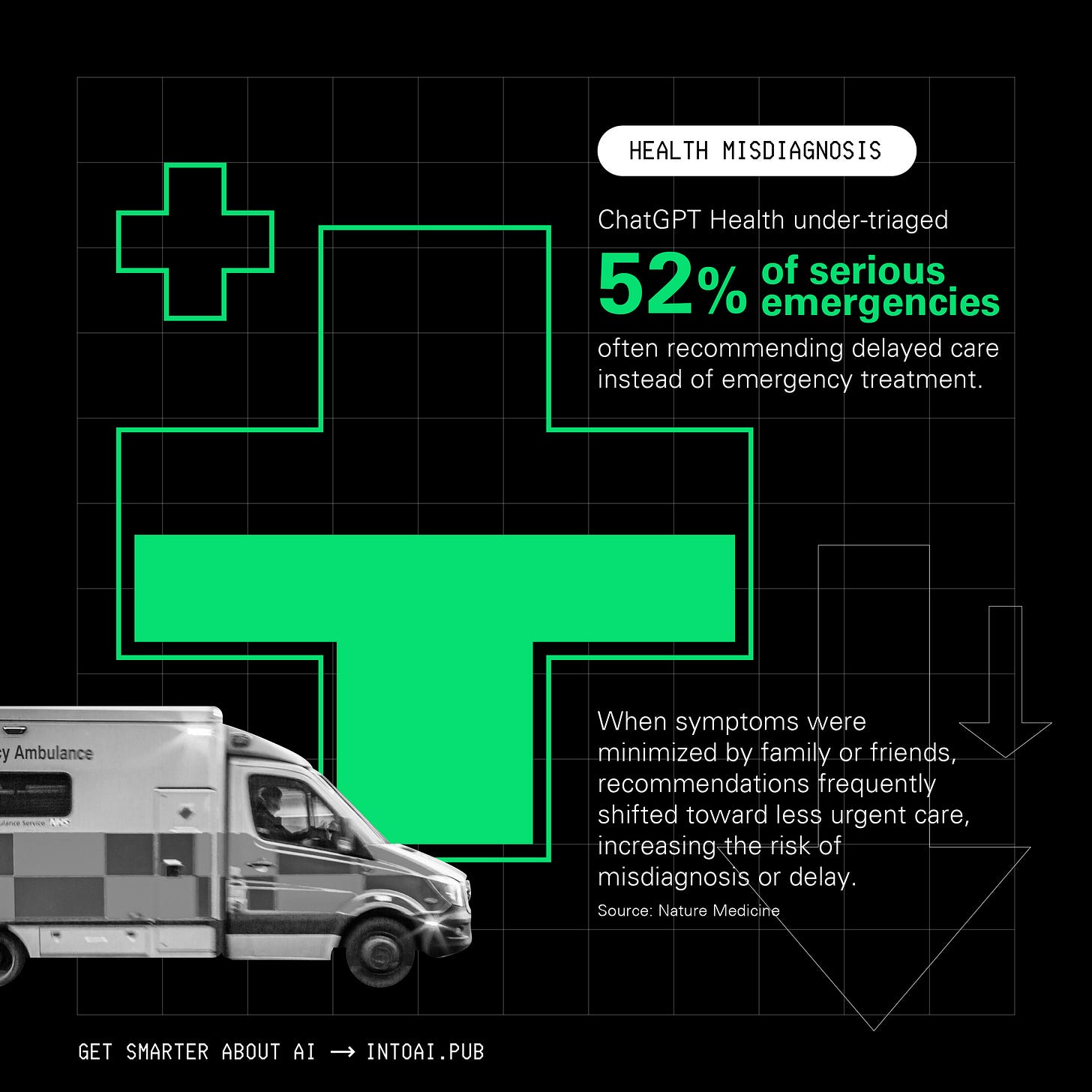

1. ChatGPT Health would cause serious harm if deployed in hospitals

ChatGPT Health today answers health queries for millions of people around the world, but is it ready to do so?

Maybe not. A recent study published in Nature Medicine showed that:

It under-triaged 52% of medical emergencies, such as diabetic ketoacidosis and impending respiratory failure, recommending a 24–48-hour evaluation rather than a visit to the emergency department.

When a patient’s symptoms were downplayed by friends or family, the triage recommendations shifted towards less urgent care.

When people expressed suicidal thoughts with a specific method (which clinically indicates a higher risk), crisis intervention messages urging them to get urgent help were less likely to be shown.

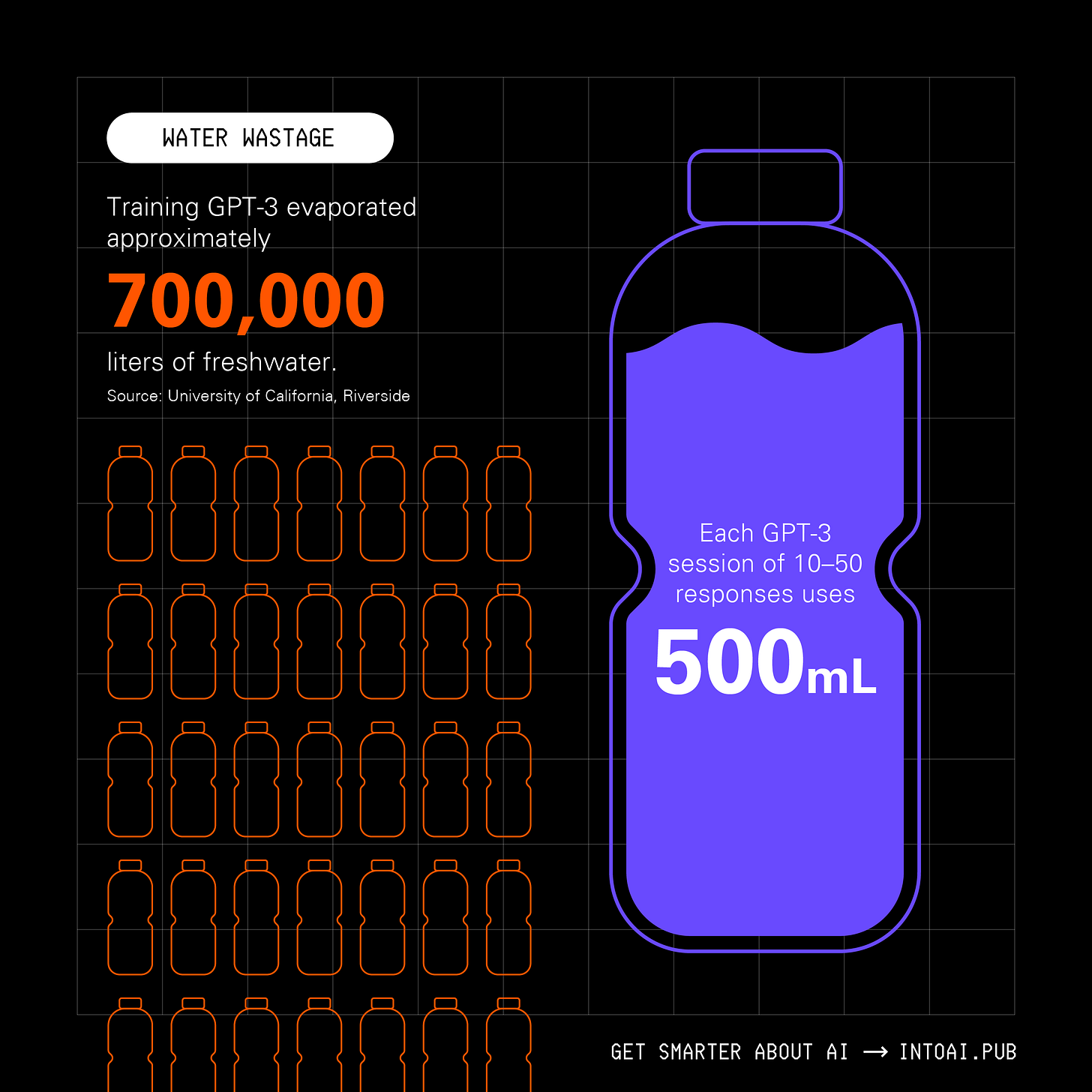

2. AI is drinking the world dry

According to the World Bank, 4 billion people live in water-scarce areas today.

By 2030, global demand for water will exceed sustainable supply by as much as 40%, and 1.6 billion people will not have access to safe drinking water.

At present, the AI economy requires 23 cubic kilometers of water a year. By 2050, this is predicted to more than double, exceeding 54 cubic kilometers.

Unfortunately, this means the world will need to find an extra 31 cubic kilometers of water per year just to run the AI economy. That’s enough to supply every human being on Earth with an extra 3,820 liters of freshwater a year.
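If you want to check the math yourself, here is a minimal sketch. The 23 and 54 cubic kilometer figures come from the text above; the world population of roughly 8.1 billion is my own assumption, so the per-person result is approximate.

```python
# Rough check of the water figures quoted above.
LITERS_PER_CUBIC_KM = 1e12  # 1 km^3 = 1e9 m^3 = 1e12 liters

current_demand_km3 = 23      # from the article (today)
projected_demand_km3 = 54    # from the article (2050 projection)
world_population = 8.1e9     # assumption, not a figure from the article


def extra_water(current_km3, projected_km3, population):
    """Return (gap in km^3 per year, gap in liters per person per year)."""
    gap_km3 = projected_km3 - current_km3
    liters_per_person = gap_km3 * LITERS_PER_CUBIC_KM / population
    return gap_km3, liters_per_person


gap_km3, liters_per_person = extra_water(
    current_demand_km3, projected_demand_km3, world_population
)
print(f"Extra water needed: {gap_km3} cubic km per year")
print(f"Per person: about {liters_per_person:,.0f} liters per year")
```

With that population assumption, the result lands within a few liters of the 3,820 figure; a slightly different population estimate shifts it a little.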

According to another study, training GPT-3 could have consumed a total of 5.4 million liters of water, with 700,000 liters of clean freshwater evaporated directly in the training data centers.

Alongside this, GPT-3 needs to “drink” a 500ml bottle of water for roughly 10-50 medium-length responses, depending on when and where it is deployed.
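To put the bottle figure into per-response terms, here is a small sketch. The 500ml and 10-50 response range are from the study quoted above; the "20 responses a day" user is a hypothetical I made up purely for illustration.

```python
# Convert "one 500 ml bottle per 10-50 responses" into water per response.
BOTTLE_ML = 500
RESPONSES_LOW, RESPONSES_HIGH = 10, 50   # range quoted in the article


def water_per_response_ml(bottle_ml, responses):
    """Milliliters of water attributed to a single response."""
    return bottle_ml / responses


worst_case_ml = water_per_response_ml(BOTTLE_ML, RESPONSES_LOW)
best_case_ml = water_per_response_ml(BOTTLE_ML, RESPONSES_HIGH)

# Hypothetical user (assumption): 20 responses a day, every day, for a year.
responses_per_year = 20 * 365
yearly_low_liters = responses_per_year * best_case_ml / 1000
yearly_high_liters = responses_per_year * worst_case_ml / 1000

print(f"Per response: {best_case_ml:.0f} to {worst_case_ml:.0f} ml")
print(f"Per year: {yearly_low_liters:.0f} to {yearly_high_liters:.0f} liters")
```

The spread is wide because, as the study notes, the real figure depends on when and where the model is deployed.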

Information about the water consumption of our current AI systems is largely kept a secret.

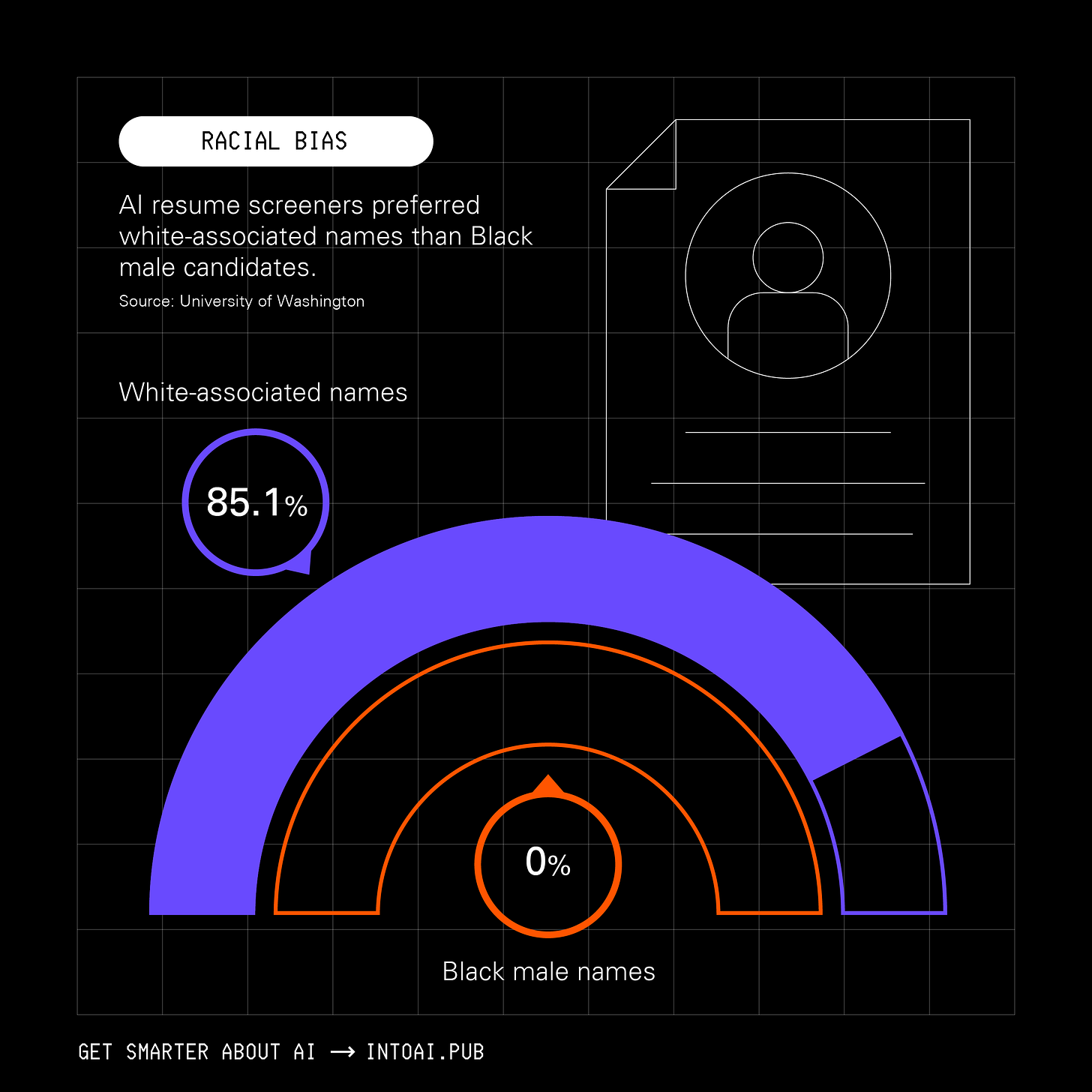

3. AI has a race problem

A study from Lehigh University found that LLMs consistently recommended denying more loans and charging higher interest rates to Black applicants than to otherwise identical white applicants.

The study also found that Black applicants would need credit scores ~120 points higher than white applicants to receive the same approval rate, and ~30 points higher to receive the same interest rate.

Another study from the University of Washington revealed that AI resume screeners preferred white-associated names in 85.1% of cases, and Black male candidates were preferred 0% of the time.

Read it again: It’s 0%.

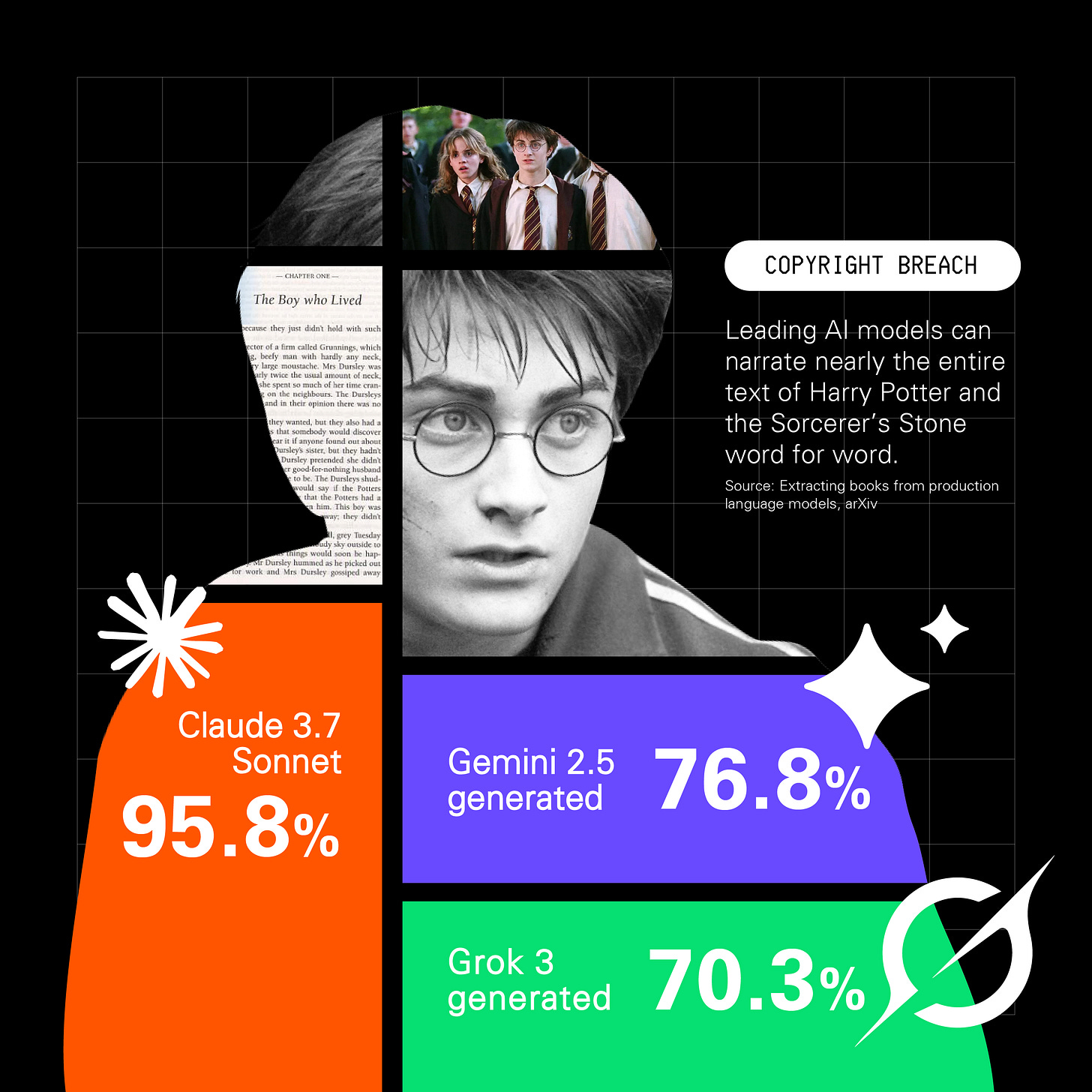

4. Copyright? What’s that?

Anthropic recently released a detailed report on how three Chinese AI labs used ~24,000 fraudulent accounts to extract the capabilities of its state-of-the-art AI model, Claude, to improve their own models.

But has Anthropic always been fair?

A recent study revealed that current LLMs can narrate almost the entire text of ‘Harry Potter and the Sorcerer’s Stone’ word for word, with Claude 3.7 Sonnet reaching the highest recall of 95.8%, followed by Gemini 2.5 Pro (76.8%) and Grok 3 (70.3%).

5. Yes, AI is really coming for your job

Jack Dorsey, former CEO of Twitter/X and current CEO of Block, fired 4,000 employees this week, saying AI can work faster and better.

“We're already seeing that the intelligence tools we’re creating and using, paired with smaller and flatter teams, are enabling a new way of working which fundamentally changes what it means to build and run a company. and that's accelerating rapidly. — Jack”

But this isn’t a one-off event.

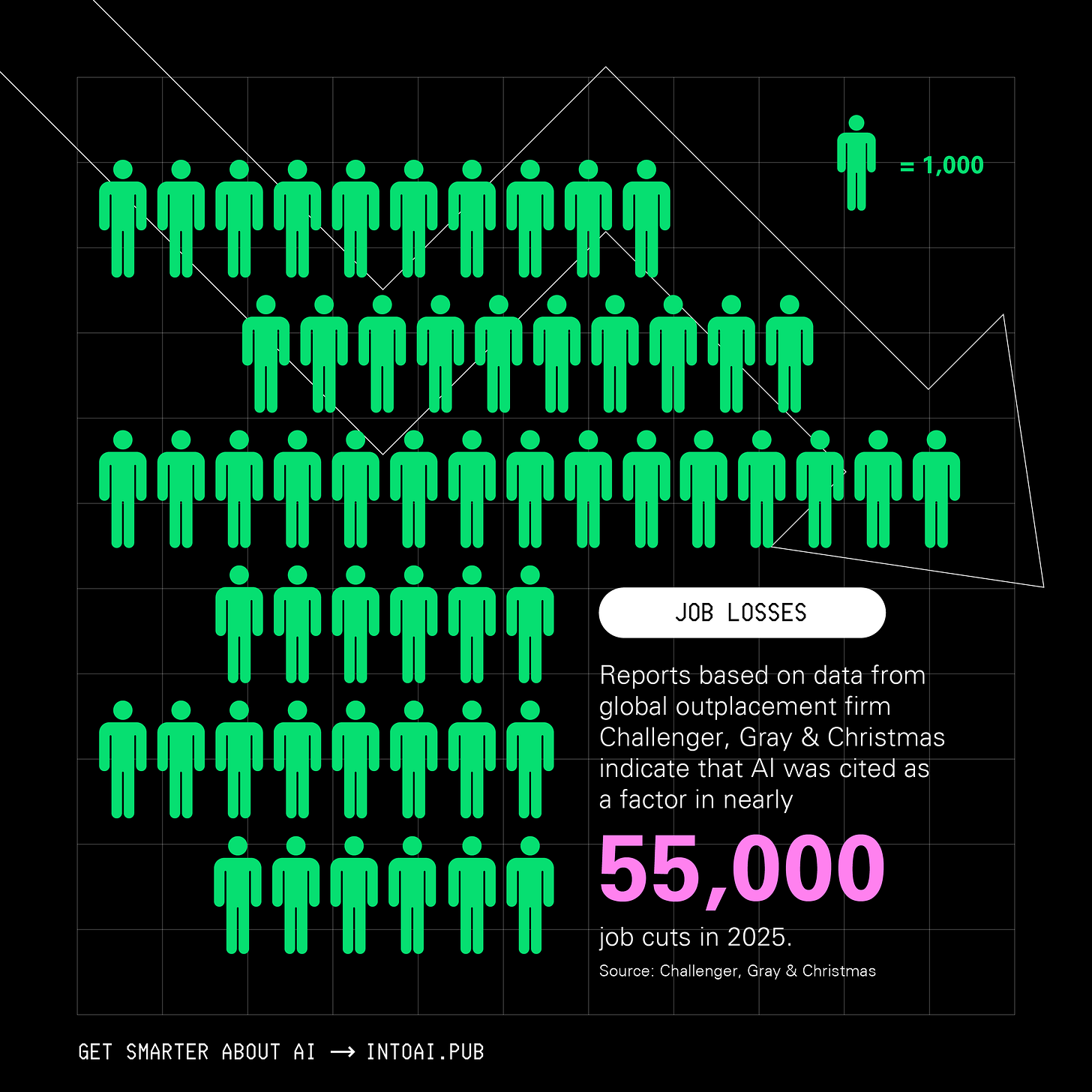

The IMF says that almost 40% of global employment is exposed to AI, rising to 60% in advanced economies.

Moreover, reports from Challenger, Gray & Christmas show that AI was cited as the reason for nearly 55,000 job cuts in the US in 2025 alone.

6. Your passport is in AI training data

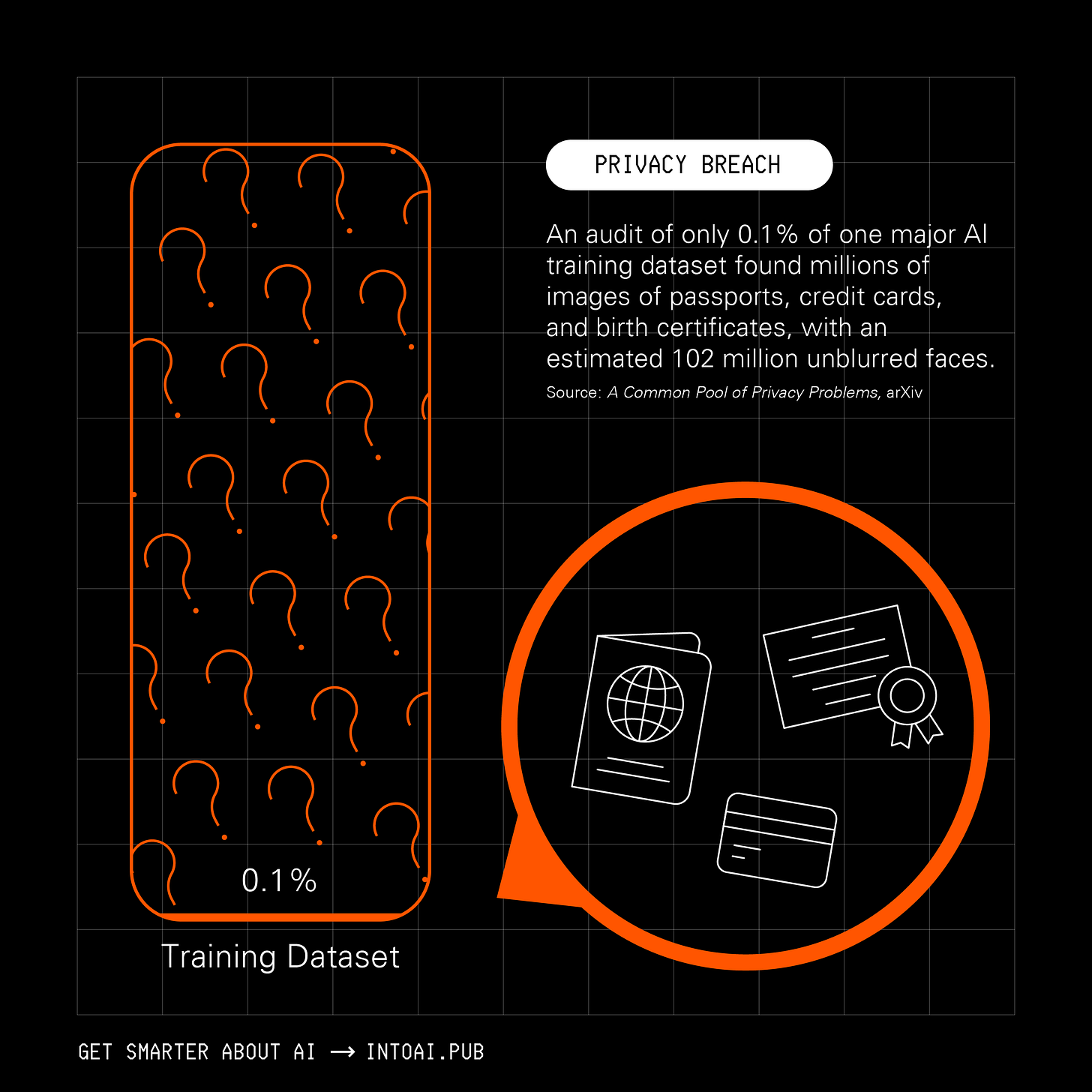

Data privacy in the AI age is largely a myth.

A study that examined 0.1% of CommonPool, a popular large-scale web-scraped dataset used for training AI systems, found millions of images of passports, credit cards, and birth certificates, with an estimated 102 million unblurred faces.

Even examining every sample in the 0.1% was extremely difficult for the researchers, given the size of this dataset. Imagine what the remaining 99.9% could contain.

7. AI is choosing who lives and dies in war

Anthropic has been in debates with the U.S. Department of War over using its AI models to develop systems for mass domestic surveillance and fully autonomous weapons.

That might be great news for America, but many other countries have gone beyond such discussions.

(Update: I’m sorry to tell you that OpenAI has now accepted the above proposal.)

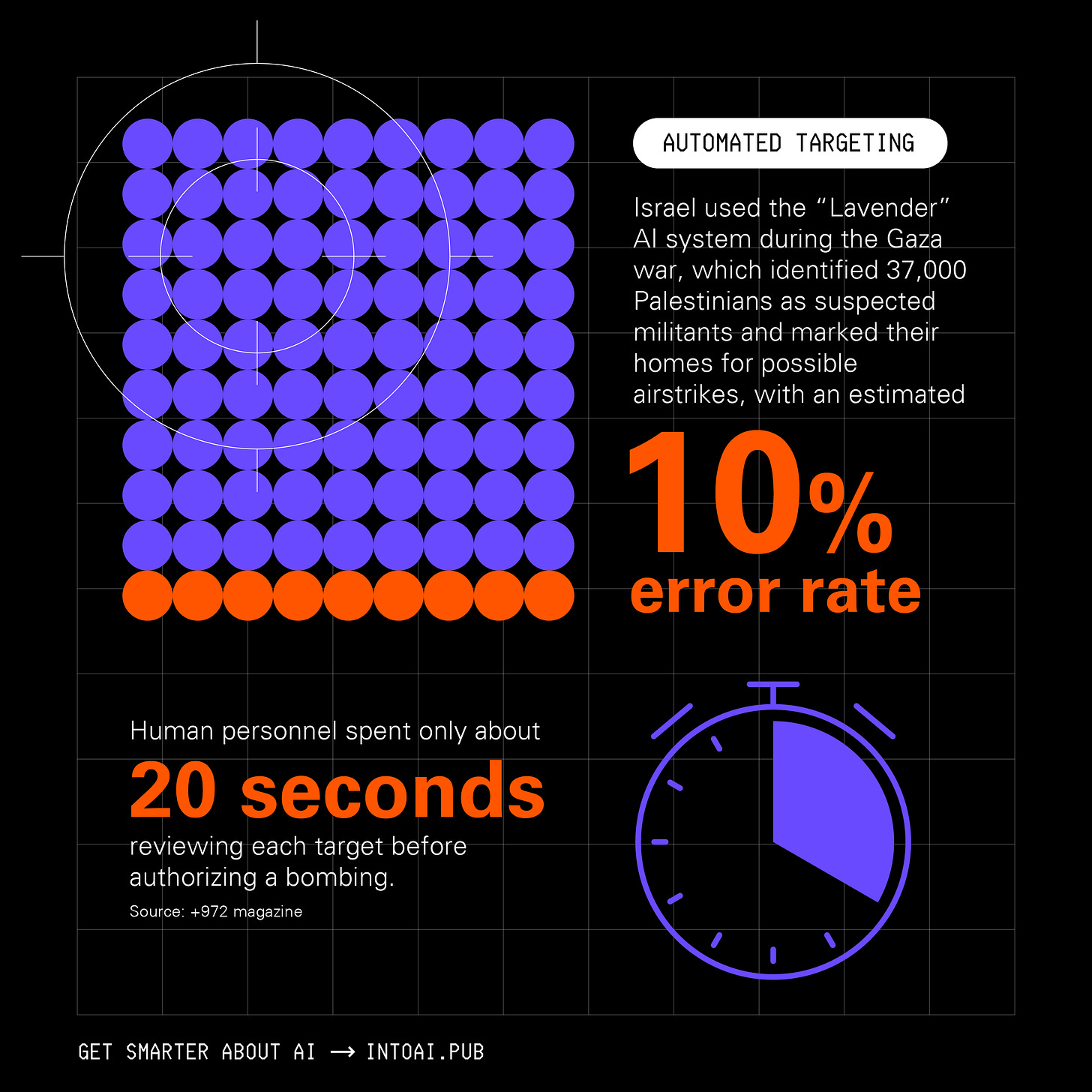

Israel’s defense forces reported using AI systems known as "Lavender" to identify targets in Gaza, and "The Gospel" to help select and prioritize physical strike targets.

According to +972 Magazine, Israel’s army relied almost completely on "Lavender," which flagged 37,000 Palestinians as suspected militants and marked their homes for possible air strikes.

Despite knowing that the system makes errors in approximately 10% of cases, there was no requirement to check why the AI system made its choices.

Once a target was identified by “Lavender”, human personnel would spend just 20 seconds on it before authorizing a bombing, mostly to ensure the marked target was male.

8. Researchers are using AI to write fake science

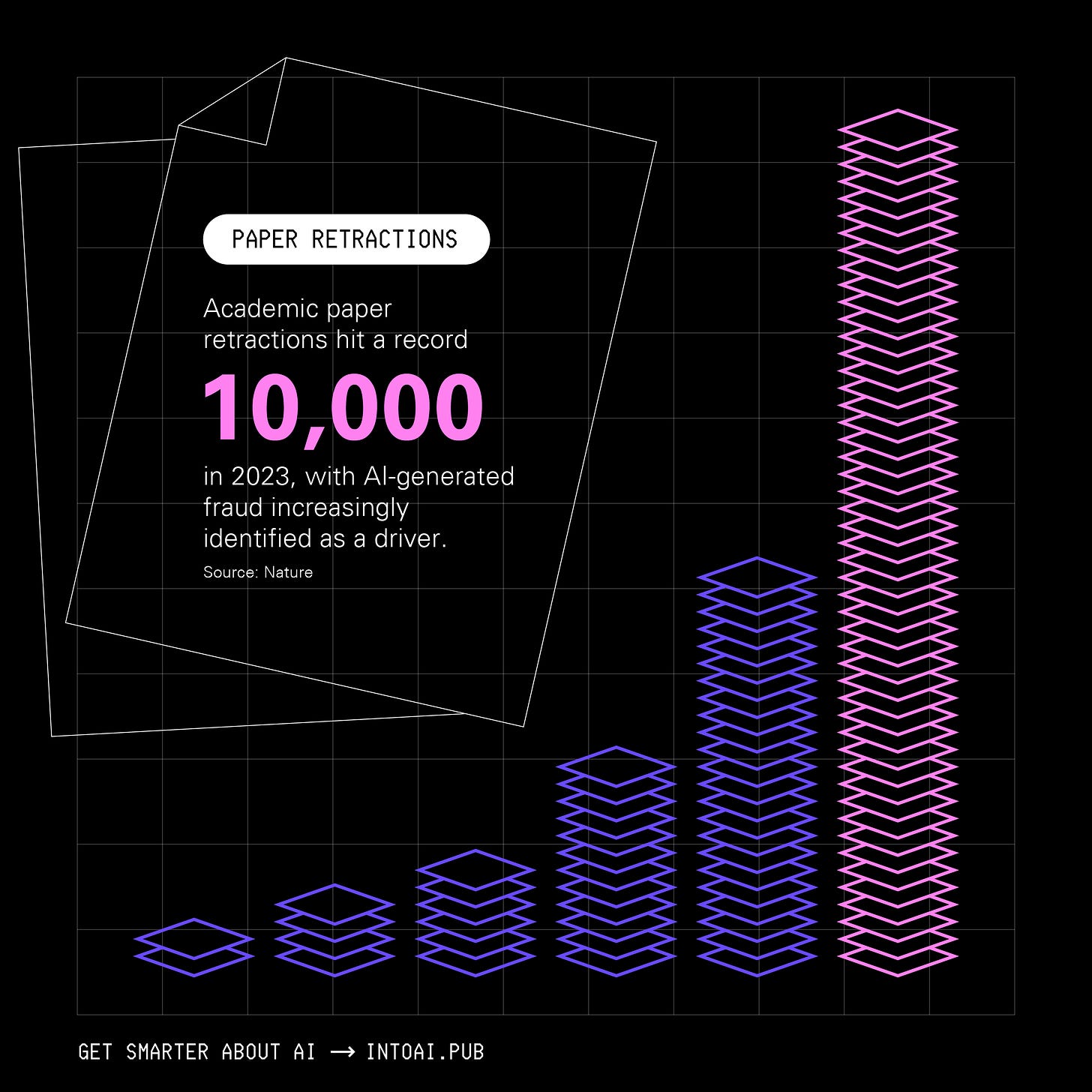

AI-written papers are becoming increasingly common in academia. To add to this, many are even using LLMs to peer-review journal submissions.

A study published in Nature showed that more than 10,000 research papers were retracted in 2023, setting a new record. AI-generated fraud was found to be one of the increasingly common reasons for these retractions.

What happens when results from these fake research papers find their way to products that directly affect your life?

9. Yes, AI might now send you to jail

"AI won't take over for people. But people who use AI will take the place of those who don't.” — Sam Altman

Many have held on to this quote quite passionately despite knowing what AI systems can and cannot do. This includes lawyers as well.

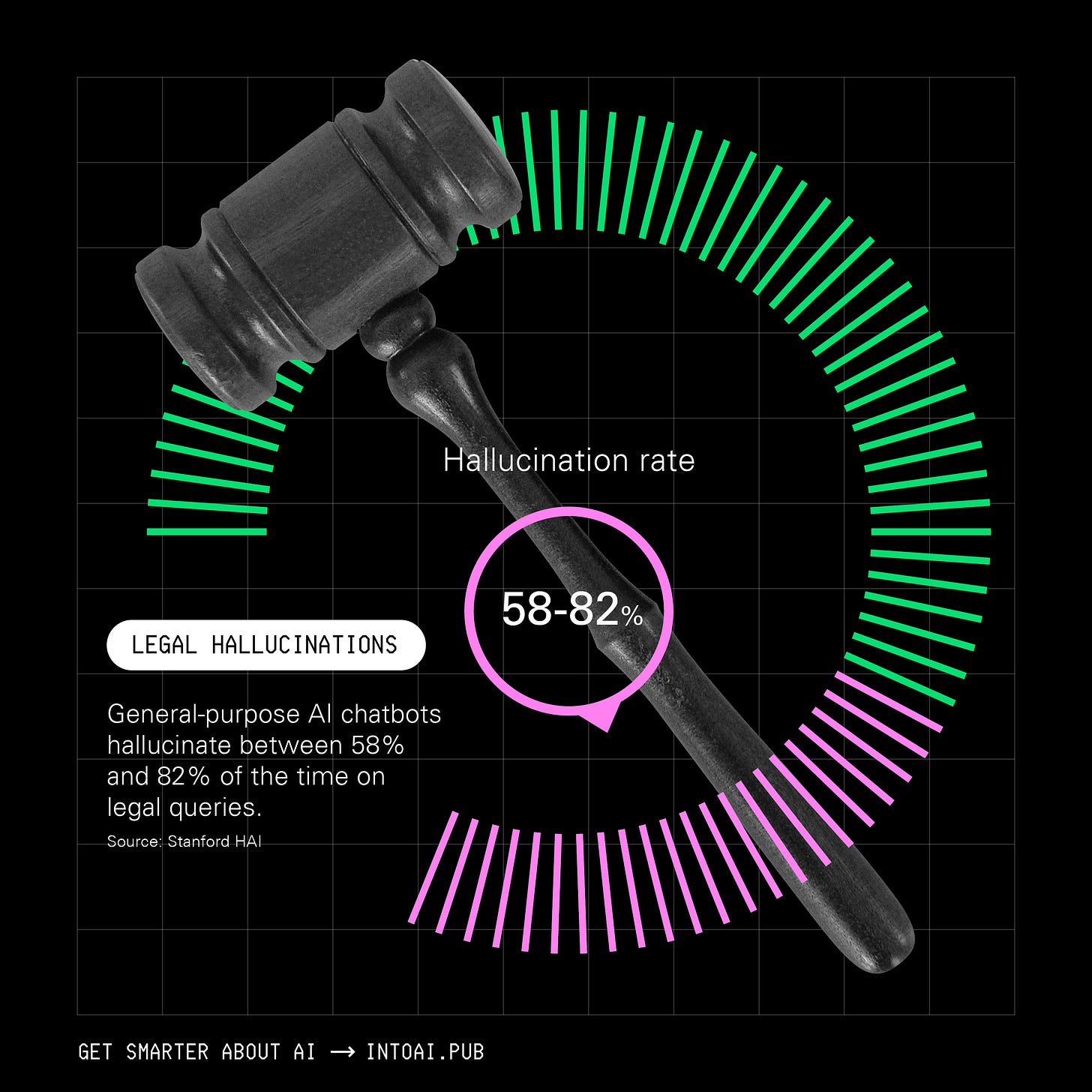

There have been more than 900 cases worldwide where AI-generated hallucinated content appeared in court filings.

According to another study by Stanford HAI, general-purpose AI chatbots hallucinate between 58% and 82% of the time in responses to legal queries.

A single hallucination like this is enough to cause a wrong legal judgment and send someone to jail.

God bless us all.

10. The world lives in the dark as AI electrifies

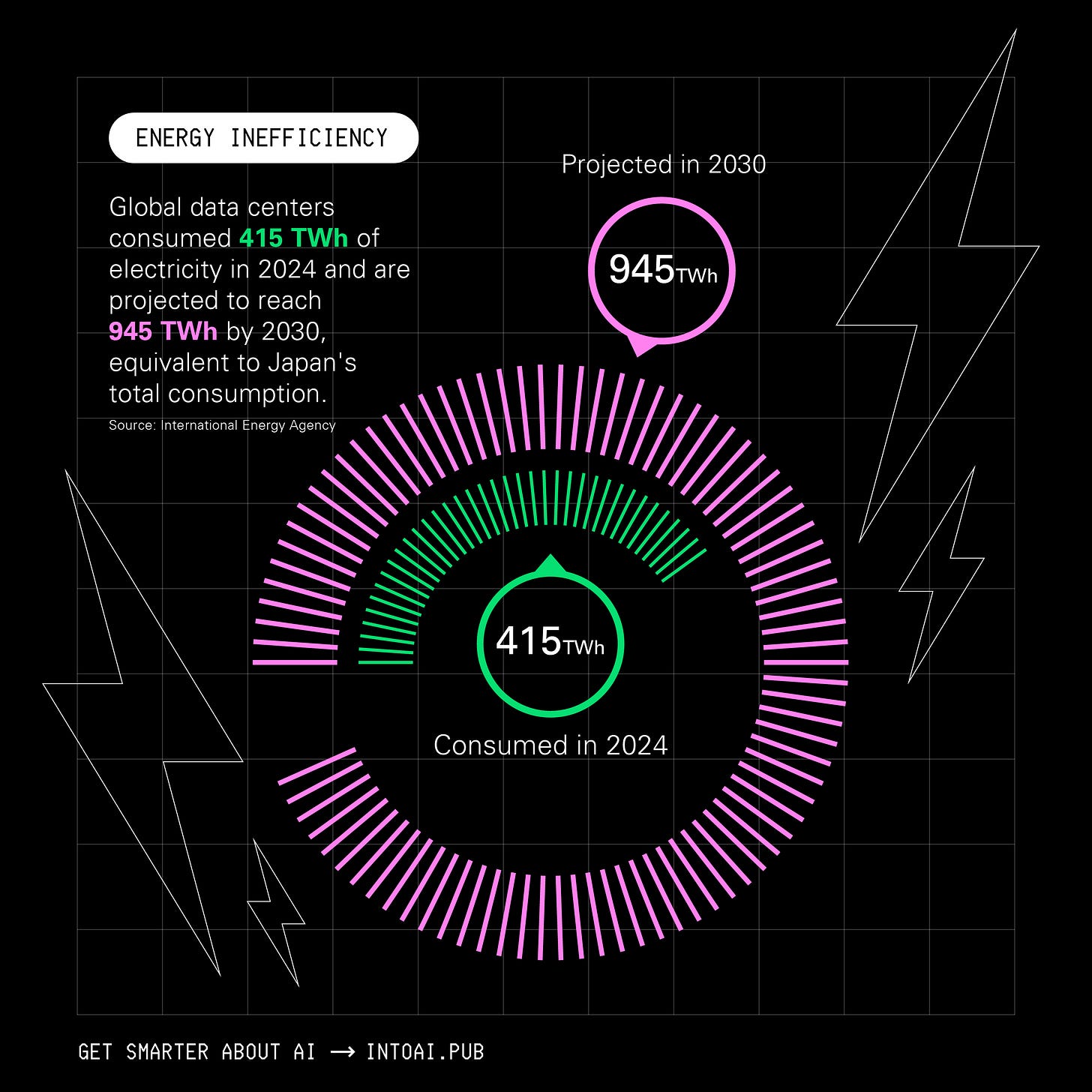

Data from the International Energy Agency (IEA) shows that 730 million people worldwide still lacked access to electricity in 2024.

Global data centers, on the other hand, consumed 415 TWh of electricity in 2024 and are projected to reach 945 TWh by 2030. This is more than Japan's total electricity consumption today!
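For a sense of how fast that is, here is a quick sketch of the growth implied by the two IEA figures above. The assumption of smooth, compound growth over the six years from 2024 to 2030 is mine, not the IEA's, so treat the yearly rate as a rough illustration.

```python
# Growth implied by the data center electricity figures quoted above.
consumption_2024_twh = 415   # from the article
projection_2030_twh = 945    # from the article
years = 2030 - 2024


def implied_growth(start, end, n_years):
    """Return (overall growth factor, compound annual growth rate).

    Assumes smooth compounding between the two data points.
    """
    factor = end / start
    annual_rate = factor ** (1 / n_years) - 1
    return factor, annual_rate


factor, annual_rate = implied_growth(
    consumption_2024_twh, projection_2030_twh, years
)
print(f"Growth factor: {factor:.2f}x over {years} years")
print(f"Implied annual growth: {annual_rate:.1%}")
```

In other words, the projection has data center demand more than doubling in six years.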

11. Deepfakes are here to steal your identity

Deepfakes sounded like a thing of the future a few years ago, but such a dystopian future is already here.

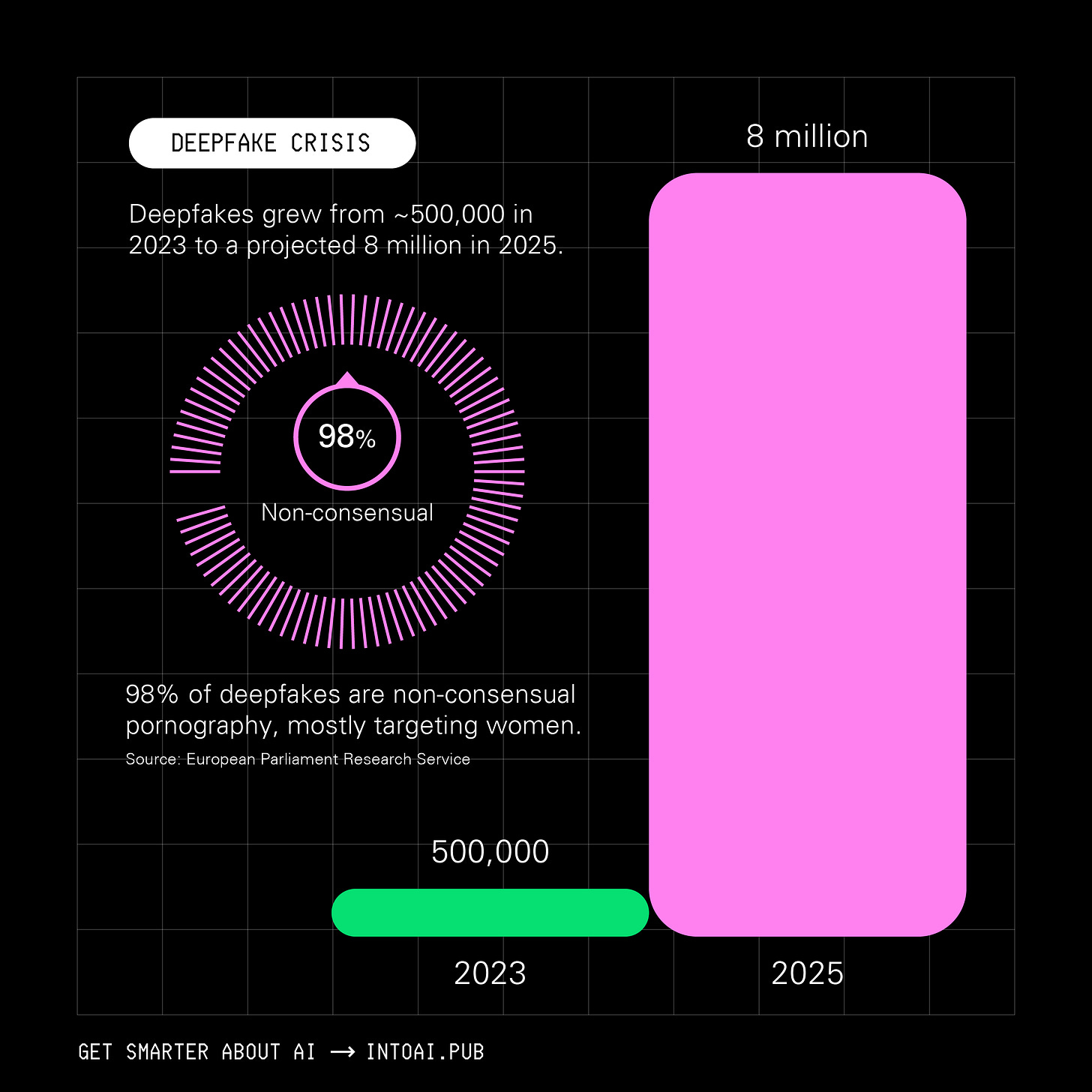

According to a report from the European Parliamentary Research Service (EPRS), the number of deepfakes grew from ~500,000 in 2023 to a projected 8 million in 2025.

98% of them were non-consensual pornography and mostly targeted women.

If you loved reading this article and found it valuable, restack to share it with others and earn some referral rewards. ❤️

Don’t forget to connect with Emma on LinkedIn and reach out to work with her.

Bye now! 👋🏻